📖 7 min read

Gemma 4 in LM Studio: What the Specs Say, What Early Users Report, and Why BetOnAI Is Doing a Hands-On Follow-Up

Google’s new Gemma 4 family is already getting treated like one of the most important local AI releases of the year. LM Studio has model pages up, Google is pushing it as its strongest open model family yet, and early local AI users are already arguing about whether it is the real Qwen challenger or just another benchmark darling that gets messy in the real world.

That is exactly why this article exists.

This is not a fake “we tested everything ourselves” review. This first version is a research-backed field report built from official product details plus early community and write-up signals. Then BetOnAI editorial will publish a second variant where we run Gemma 4 ourselves on real tasks and score what actually holds up.

So for now, the right question is not “Is Gemma 4 definitely amazing?”

📧 Want more like this? Get our free The 2026 AI Playbook: 50 Ways AI is Making People Rich — Free for a limited time - going behind a paywall soon

It is:

What do the specs say, what are early users reporting, and does it look promising enough to deserve a proper hands-on BetOnAI test?

What Gemma 4 is supposed to be

According to Google, Gemma 4 is its most capable open model family so far, built from Gemini 3 research and released under an Apache 2.0 license. Google is clearly positioning it as more than a lightweight chat model. The pitch is broader: reasoning, agentic workflows, code generation, longer context, multimodal support, and local deployment across a range of hardware.

LM Studio is echoing that positioning. Its Gemma 4 pages describe the family as capable of reasoning, tool use, and vision input, with model sizes ranging from smaller edge-oriented variants to larger local workstation models.

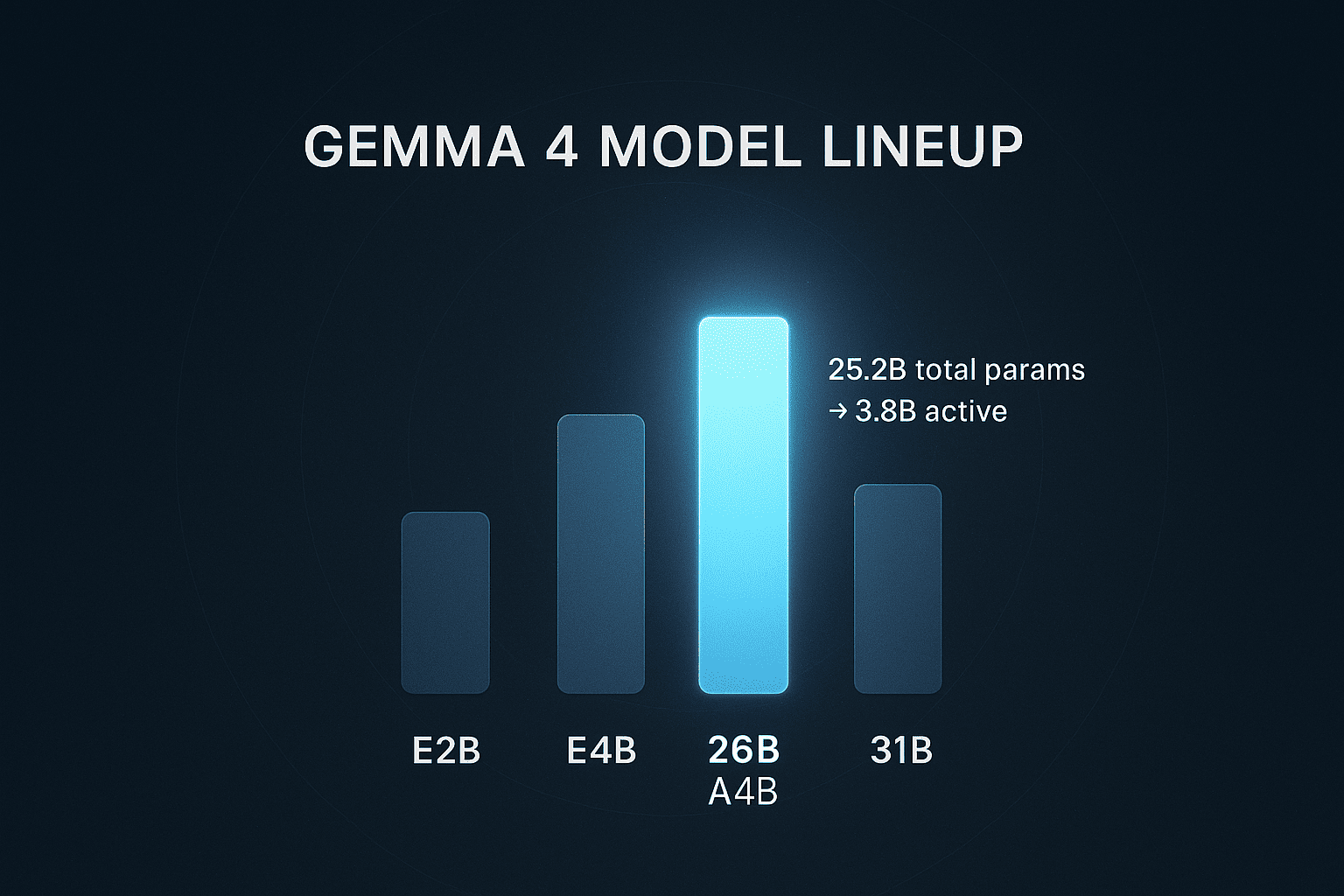

The lineup currently highlighted includes:

- E2B

- E4B

- 26B A4B

- 31B

LM Studio says the smallest Gemma 4 can run with roughly 4 GB of RAM, while the largest may require up to 19 GB. It also highlights context windows up to 256K tokens on larger variants, support for tool use, and availability in formats relevant to local use like GGUF and MLX.

That alone makes Gemma 4 interesting. Google is not just saying “here is another open model.” It is saying “here is an open model family that is supposed to be practical on real hardware.”

Why the 26B A4B model is getting the most attention

If there is one Gemma 4 variant that looks most interesting for local AI people right now, it is probably the 26B A4B Mixture-of-Experts model.

On LM Studio’s own model page, the 26B A4B variant is described as having about 25.2B total parameters but only about 3.8B active parameters during inference. That matters because it changes the economics of local AI.

In plain English, the model is much larger than a tiny local model, but it does not behave like a fully dense 26B model every time it generates a token. That is why people keep calling it a possible sweet spot: you get a lot more capability than a small dense model, but without paying the full speed and memory cost you would expect from a big dense system.

LM Studio’s own materials also show a strong benchmark story for the family overall. On its published table, the 31B model posts numbers like 85.2% on MMLU Pro, 89.2% on AIME 2026 (no tools), and 80.0% on LiveCodeBench v6. The 26B A4B model trails it only slightly on several of those metrics while still looking much easier to justify for local use.

That is the heart of the Gemma 4 pitch.

Not just “good open model.”

More like:

Maybe the first new Google open model release that serious local AI users might actually want to run instead of just benchmark once and forget.

What early users are reporting

This is where things get more interesting, because the early write-ups are not uniformly glowing.

One useful early article on running Gemma 4 locally with LM Studio described the 26B A4B as the practical sweet spot on a 14-inch M4 Pro MacBook Pro with 48 GB of unified memory, reporting around 51 tokens per second in that setup. That same write-up argues that the model offers a compelling balance of quality and local feasibility, especially if your goal is to reduce API dependence for tasks like drafting, code review, and prompt experimentation.

That is the bullish case.

Join 2,400+ readers getting weekly AI insights

Free strategies, tool reviews, and money-making playbooks - straight to your inbox.

No spam. Unsubscribe anytime.

There is also a more skeptical early reaction thread in broader community reporting. A DEV write-up summarizing early user feedback notes several concerns that matter a lot more in practice than they do in a launch blog post:

- inference speed concerns, especially versus Qwen-style competitors on similar hardware

- high VRAM/context pressure

- fine-tuning tooling friction shortly after release

- open questions about stability and maturity in real-world use

That is a meaningful split.

On one side, Gemma 4 looks like a big leap in intelligence-per-parameter and local usefulness. On the other, some early users are effectively saying: yes, but speed, memory behavior, and ecosystem maturity still matter, and this is not automatically a frictionless local winner.

What the X community is saying right now

To strengthen that picture, we also pulled supporting community signal from X using Sorsa API. Not as benchmark proof, but as a way to see what working users are actually repeating in public.

The biggest pattern is simple: the conversation is real, but mixed.

1. The launch buzz was genuine

A lot of the early excitement centered around:

- the 4-model lineup

- Apache 2.0 licensing

- immediate availability in LM Studio

- the feeling that Google had finally shipped an open model family serious local users might actually care about

2. People are talking about workflows, not just benchmarks

That matters.

Some of the more interesting posts were not benchmark screenshots. They were people trying Gemma 4 inside actual local setups, coding flows, and offline use cases.

That supports the idea that Gemma 4 is not being treated as just a curiosity. People are testing it as something that could replace at least part of an API-based workflow.

3. Speed is the most disputed issue

This is probably the single clearest community takeaway.

There are users reporting strong results on the right hardware. One recent post claimed around 75 tok/sec for a local Gemma 4 26B LM Studio coding task. Other users reported very usable performance on workstation-class GPUs.

But the opposite pattern is also showing up. Some users say the larger Gemma 4 variants feel slow on laptops or smaller machines. Others explicitly frame Gemma 4 as interesting but not yet the easiest everyday local choice.

So the community signal does not support a lazy headline like “Gemma 4 is blazing fast.”

It supports a more honest one:

Gemma 4 may be very good on the right hardware, but hardware fit still decides the experience.

4. Qwen is still the real rival in people’s minds

This is also important.

The public discussion is not really asking whether Gemma 4 beats old local models. It is asking whether Gemma 4 can beat or match Qwen 3.5 in the situations that matter most, especially on speed, practicality, and local coding usefulness.

That tells us where Gemma 4 sits in the market. It is not being judged as a novelty. It is being judged against the current local leaders.

5. LM Studio version and setup may matter more than casual readers think

At least one early LM Studio-side post specifically told users to update llama.cpp and noted that tool-calling performance would improve with LM Studio 0.4.9.

That matters because some early user impressions may be partially about setup maturity, not just pure model quality.

So, is Gemma 4 already the best local model in LM Studio?

Based on the evidence available right now, that would be too strong a claim.

What we can say more safely is this:

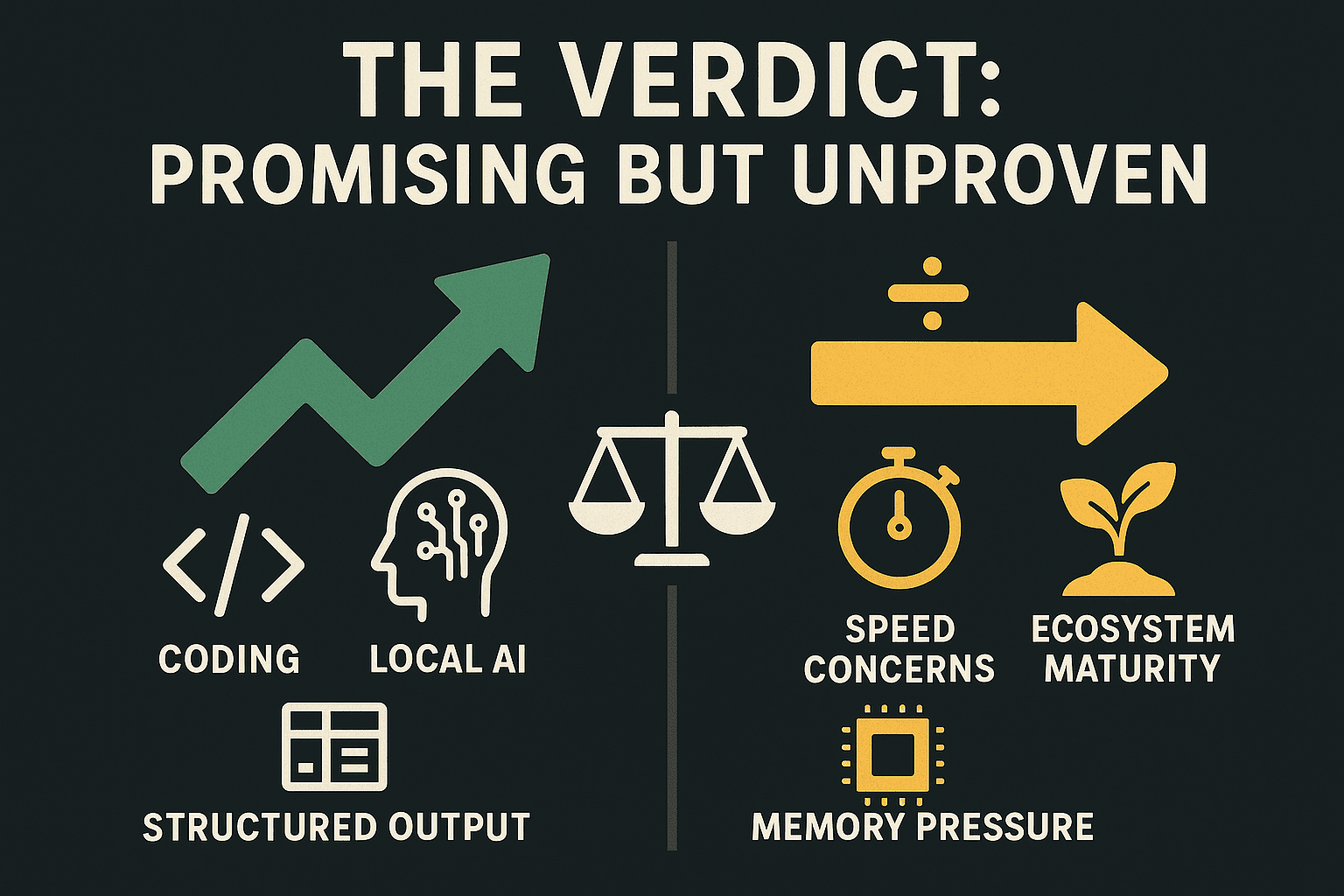

The bullish case

- Google’s official positioning is ambitious and unusually serious

- LM Studio is already treating Gemma 4 as a major local model family

- the 26B A4B design looks genuinely compelling for local inference

- early reports suggest at least some setups are getting very usable results

- coding, tool use, structured output, longer context, and multimodal support make it more than just another chatbot model

The skeptical case

- benchmark strength does not always equal smooth daily use

- some early reports suggest Qwen may still feel faster or easier in certain local environments

- memory/context behavior may be more demanding than people expect

- the local ecosystem around Gemma 4 still looks younger and less battle-tested than the headlines imply

That means Gemma 4 looks important, but not yet like a universal slam dunk.

The honest BetOnAI verdict, for now

Gemma 4 in LM Studio looks strong enough to take seriously.

It does not yet look like something we should crown the default local champion without doing our own work.

If you care about:

- lowering API costs

- keeping work local

- coding on-device

- structured outputs and agent workflows

- pushing long context on consumer hardware

then Gemma 4 absolutely looks worth watching, and probably worth testing.

But if you care most about:

- low-friction setup

- stable speed

- mature local tooling

- predictable performance across hardware

then it is still too early to pretend the decision is settled.

The right read today is:

Gemma 4 is one of the most interesting local-model releases of 2026, but the gap between official promise and daily reality still needs hands-on verification.

What BetOnAI editorial is doing next

This is Part 1 of the sequence.

This article is the research-backed version built from:

- Google’s official Gemma 4 release material

- LM Studio’s Gemma 4 model pages

- early user and write-up reporting on local performance, speed, and friction

- supporting X community signal pulled via Sorsa API

Part 2 will be the hands-on editorial test

In the follow-up piece, BetOnAI editorial will run Gemma 4 ourselves and score it on real tasks such as:

- coding help

- long-context summarization

- structured output / JSON tasks

- research assistance

- local replacement potential for specific API workflows

That second variant is the one where we answer the question people actually care about:

Can Gemma 4 in LM Studio do enough real work to justify the local setup, or is it still more hype than workflow?

For now, the fair conclusion is simple:

Gemma 4 looks promising, serious, and very worth watching, but not yet proven enough to call without a direct BetOnAI hands-on test.

Enjoyed this? There's more where that came from.

Get the AI Playbook - 50 ways AI is making people money in 2026.

Free for a limited time.

Join 2,400+ subscribers. No spam ever.